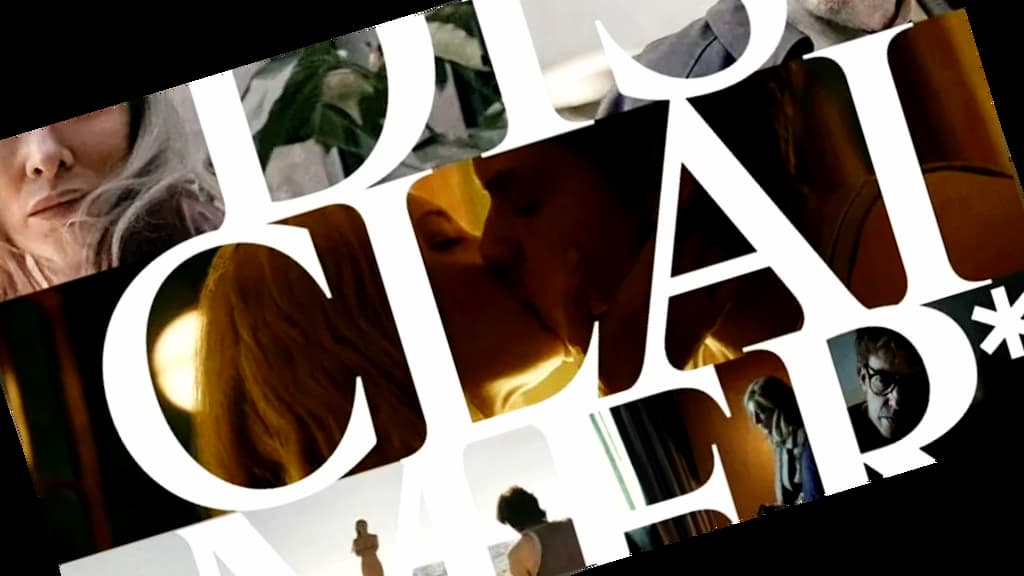

Alfonso Cuarón’s psychological thriller Disclaimer begins as a story about journalism and guilt — but quickly evolves into a sharp reflection on power, narrative control, and truth. As I watched, I couldn’t help but draw a parallel between the manipulation of stories in the show and the way artificial intelligence is reshaping content today. 🤖

In the series, the protagonist — a respected journalist — loses control of her own narrative. Someone else writes “her story,” framing it in a way that feels true, but distorts key facts. And just like that, a false version of reality gains traction. 😱

It’s a powerful reminder: The person (or system) who controls the story… controls what people believe.

Now think about the role of AI in our current information landscape.

Content is being generated faster than ever: news summaries, product descriptions, translations, scripts, marketing copy — all crafted by systems trained on billions of words. These tools are impressive. They’re fluent. They sound human. But: 🤔

- Do they reflect truth?

- Do they understand context?

- Do they respect cultural nuance?

- Can they sense harm?

The answer is: not without human oversight. 🛡️

As a Human Linguistic Quality Assurance (LQA) Specialist, my role is to stand between automation and the audience — not to stop technology, but to guide it. I review AI-generated content not just for language quality, but for ethics, inclusion, intent, and integrity.

Because without this, even a “perfect” translation or sentence can cause misunderstanding or harm. 💔 And in a world where people increasingly trust whatever they read or hear online, that’s a real risk. ⚠️

So what does Disclaimer teach us about AI?

It shows us how fragile truth becomes when someone else takes over our narrative.

It reminds us that stories can be powerful tools — or dangerous weapons, depending on who tells them.

And it warns us about the seductive nature of well-packaged falsehoods.

As the journalist in the show learns too late, we must be vigilant about who gets to speak, how, and for whom.

My conclusion?

AI doesn’t understand truth — but humans do. 🧠

That’s why Human Quality Assurance is no longer just a nice-to-have.

It’s a shield. A filter. A line of defense. 🛡️

And in this new era, the job of the Human Linguist Reviewer isn’t just to polish text.

It’s to guard the narrative. 🔒